| Panel | |

|---|---|

|

Disclaimer

Your use of this download is governed by Stonebranch’s Terms of Use, which are available at https://www.stonebranch.com/integration-hub/Terms-and-Privacy/Terms-of-Use/

Overview

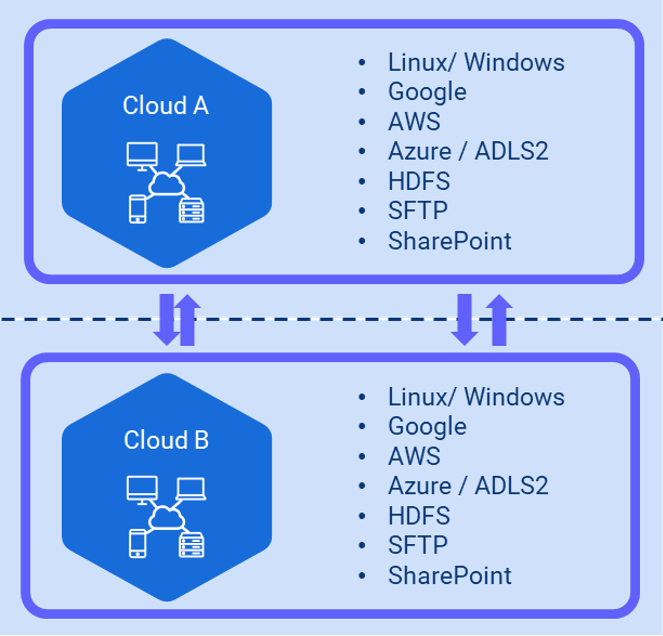

The Inter-Cloud Data Transfer integration allows you to transfer data to, from, and between any of the major private and public cloud providers like AWS, Google Cloud, and Microsoft Azure.

It also supports the transfer of data to and from a Hadoop Distributed File System (HDFS) and to major cloud applications like OneDrive and SharePoint.

An advantage of using the Inter-Cloud Data Transfer integration over other approaches is that data is streamed from one object store to another without the need for intermediate storage.

Integrations with this solution package include:

- AWS S3

- Google Cloud

- SharePoint

- Dropbox

- OneDrive

- Hadoop Distributed File System (HDFS)

Software Requirements

Software Requirements for Universal Agent

Universal Agent for Linux or Windows Version 7.0.0.0 or later is required.

Universal Agent must be installed with the Python option (--python yes).

Software Requirements for Universal Controller

Universal Controller 7.0.0.0 or later.

Software Requirements for the Application to be Scheduled

...

Rclone v1.57.1 or later must be installed on the server where the Universal Agent is installed.

...

Rclone can be installed on Windows and Linux

...

To install Rclone on Linux systems, run:

| Code Block | ||||

|---|---|---|---|---|

| ||||

curl https://rclone.org/install.sh | sudo bash |

| Note | ||

|---|---|---|

| ||

If the URL is not reachable from your server, Rclone can also be installed on Linux from a pre-compiled binary. |

To install Rclone on a Linux system from a pre-compiled binary:

Fetch and unpack

| Code Block | ||||

|---|---|---|---|---|

| ||||

curl -O https://downloads.rclone.org/rclone-current-linux-amd64.zip

unzip rclone-current-linux-amd64.zip

cd rclone-*-linux-amd64 |

Copy binary file

| Code Block | ||||

|---|---|---|---|---|

| ||||

sudo cp rclone /usr/bin/

sudo chown root:root /usr/bin/rclone

sudo chmod 755 /usr/bin/rclone |

...

| Warning | ||

|---|---|---|

| ||

It is replaced by: |

| Panel | |

|---|---|

|

Disclaimer

Your use of this download is governed by Stonebranch’s Terms of Use, which are available at https://www.stonebranch.com/integration-hub/Terms-and-Privacy/Terms-of-Use/

Overview

The Inter-Cloud Data Transfer integration allows you to transfer data to, from, and between any of the major private and public cloud providers like AWS, Google Cloud, and Microsoft Azure.

It also supports the transfer of data to and from a Hadoop Distributed File System (HDFS) and to major cloud applications like OneDrive and SharePoint.

An advantage of using the Inter-Cloud Data Transfer integration over other approaches is that data is streamed from one object store to another without the need for intermediate storage.

Integrations with this solution package include:

- AWS S3

- Google Cloud

- SharePoint

- Dropbox

- OneDrive

- Hadoop Distributed File System (HDFS)

Software Requirements

Software Requirements for Universal Agent

Universal Agent for Linux or Windows Version 7.2.0.0 or later is required.

Universal Agent must be installed with the Python option (--python yes).

Software Requirements for Universal Controller

Universal Controller 7.2.0.0 or later.

Software Requirements for the Application to be Scheduled

Rclone v1.58.1 or later must be installed on the server where the Universal Agent is installed.

Rclone can be installed on Windows and Linux
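The version requirement above can be checked from Python before scheduling any transfer. The following is a minimal sketch, not part of the integration: the helper names are hypothetical, and it assumes that the first line of the `rclone version` output has the form `rclone v1.58.1`.

```python
import re
import shutil
import subprocess

MINIMUM_VERSION = (1, 58, 1)  # minimum Rclone version stated in the requirement


def parse_rclone_version(output: str) -> tuple:
    """Extract (major, minor, patch) from text containing 'rclone vX.Y.Z'."""
    match = re.search(r"rclone v(\d+)\.(\d+)\.(\d+)", output)
    if match is None:
        raise ValueError("no rclone version found in output")
    return tuple(int(part) for part in match.groups())


def meets_minimum(version: tuple, minimum: tuple = MINIMUM_VERSION) -> bool:
    """Tuples compare element by element, so (1, 60, 0) >= (1, 58, 1)."""
    return version >= minimum


def installed_rclone_version() -> tuple:
    """Run 'rclone version' for the binary found on the PATH (hypothetical helper)."""
    rclone_path = shutil.which("rclone")
    if rclone_path is None:
        raise FileNotFoundError("rclone was not found on the PATH")
    result = subprocess.run([rclone_path, "version"],
                            capture_output=True, text=True, check=True)
    return parse_rclone_version(result.stdout)
```

On the Agent server, `meets_minimum(installed_rclone_version())` would then indicate whether the installed binary satisfies the requirement.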

To install Rclone on Linux systems, run:

| Code Block | ||||

|---|---|---|---|---|

| ||||

sudo mkdir -p /usr/local/share/man/man1

sudo cp rclone.1 /usr/local/share/man/man1/

sudo mandb |

To install Rclone on Windows systems:

Rclone is a Go program and comes as a single binary file.

Download the relevant binary here.

Extract the rclone or rclone.exe binary from the archive into a folder that is in the Windows PATH.

Key Features

Some details about the Inter-Cloud Data Transfer Task:

Transfer data to, from, and between any cloud provider

Transfer between any major storage applications like SharePoint or Dropbox

Transfer data to and from a Hadoop File System (HDFS)

- Data is streamed from one object store to another (no intermediate storage)

Very fast if the object stores are in the same region

Always preserves timestamps and verifies checksums

Supports encryption, caching, compression, chunking

Perform Dry-runs

Regular Expression based include/exclude filter rules

Supported actions are:

List objects, List directory

Copy / Move

Remove object / object store

Perform Dry-runs

Monitor object

Copy URL

Import Inter-Cloud Data Transfer Downloadable Universal Template

To use this downloadable Universal Template, you first must perform the following steps:

This Universal Task requires the Resolvable Credentials feature. Check that the Resolvable Credentials Permitted system property has been set to true.

Copy or Transfer the Universal Template file to a directory that can be accessed by the Universal Controller Tomcat user.

In the Universal Controller UI, select Configuration > Universal Templates to display the current list of Universal Templates.

Right-click any column header on the list to display an Action menu.

Select Import from the menu, enter the directory containing the Universal Template file(s) that you want to import, and click OK.

When the files have been imported successfully, the Universal Template will appear on the list.

Configure Inter-Cloud Data Transfer Universal Tasks

Configuring a new Inter-Cloud Data Transfer requires two steps:

Configure the connection file

Create a new Inter-Cloud Data Transfer Task

...

| Code Block | ||||

|---|---|---|---|---|

| ||||

curl https://rclone.org/install.sh | sudo bash |

| Note | ||

|---|---|---|

| ||

If the URL is not reachable from your server, Rclone can also be installed on Linux from a pre-compiled binary. |

To install Rclone on Linux system from a pre-compiled binary:

Fetch and unpack

| Code Block | ||||

|---|---|---|---|---|

| ||||

curl -O https://downloads.rclone.org/rclone-current-linux-amd64.zip

unzip rclone-current-linux-amd64.zip

cd rclone-*-linux-amd64 |

Copy binary file

| Code Block | ||||

|---|---|---|---|---|

| ||||

sudo cp rclone /usr/bin/

sudo chown root:root /usr/bin/rclone

sudo chmod 755 /usr/bin/rclone |

Install manpage

| Code Block | ||||

|---|---|---|---|---|

| ||||

sudo mkdir -p /usr/local/share/man/man1

sudo cp rclone.1 /usr/local/share/man/man1/

sudo mandb |

To install Rclone on Windows systems:

Rclone is a Go program and comes as a single binary file.

Download the relevant binary here.

Extract the rclone or rclone.exe binary from the archive into a folder that is in the Windows PATH.

Key Features

Some details about the Inter-Cloud Data Transfer Task:

Transfer data to, from, and between any cloud provider

Transfer between any major storage applications like SharePoint or Dropbox

Transfer data to and from a Hadoop File System (HDFS)

Download a URL's content and copy it to the destination without saving it in temporary storage

- Data is streamed from one object store to another (no intermediate storage)

- Horizontally scalable: allows multiple parallel file transfers, up to the number of cores in the machine

- Supports Chunking - Larger files can be divided into multiple chunks

Always preserves timestamps and verifies checksums

Supports encryption, caching, and compression

Perform Dry-runs

Dynamic Token updates for SharePoint connections

Regular Expression based include/exclude filter rules

Supported actions are:

List objects

List directory

- Copy / Move

Copy To / Move To

- Sync Folders

Remove object / object store

Perform Dry-runs

Monitor object, including triggering of Tasks and Workflows

Copy URL

Import Inter-Cloud Data Transfer Universal Template

To use this downloadable Universal Template, you first must perform the following steps:

- This Universal Task requires the Resolvable Credentials feature. Check that the Resolvable Credentials Permitted system property has been set to true.

- To import the Universal Template into your Controller, follow the instructions here.

- When the files have been imported successfully, refresh the Universal Templates list; the Universal Template will appear on the list.

Configure Inter-Cloud Data Transfer Universal Tasks

Configuring a new Inter-Cloud Data Transfer requires two steps:

Configure the connection file.

Create a new Inter-Cloud Data Transfer Task.


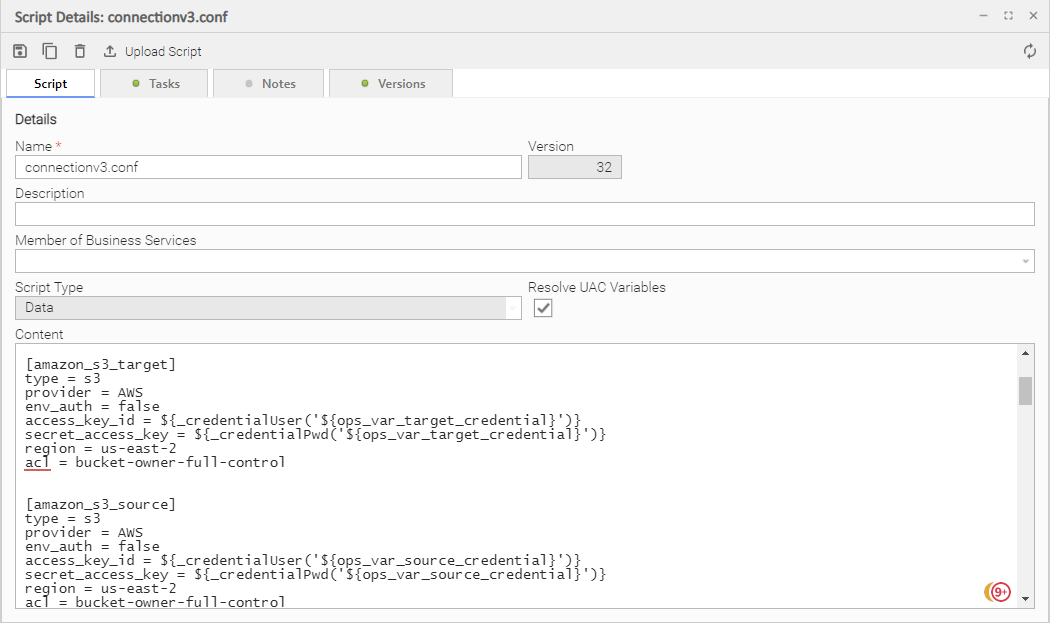

The connection file contains all required Parameters and Credentials to connect to the Source and Target Cloud Storage System.

The provided connection file, below, contains the basic connection Parameters (flags) to connect to AWS, Azure, Linux, OneDrive (SharePoint), Google and HDFS.

Additional Parameters can be added if required. Refer to the rclone documentation for all possible flags: Global Flags.

The following connection file must be saved in the Universal Controller script library. This file is later referenced in the different Inter-Cloud Data Transfer tasks.

connections.conf (v4)

| Code Block | ||||||

|---|---|---|---|---|---|---|

| ||||||

# Script Name: connectionv4.conf

#

# Description:

# Connection Script file for Inter-Cloud Data Transfer Task

#

#

# 09.03.2022 Version 1 Initial Version requires UA 7.2

# 09.03.2022 Version 2 SFTP support

# 27.04.2022 Version 3 Azure target mistake corrected

# 13.05.2022 Version 4 Copy_Url added

#

[amazon_s3_target]

type = s3

provider = AWS

env_auth = false

access_key_id = ${_credentialUser('${ops_var_target_credential}')}

secret_access_key = ${_credentialPwd('${ops_var_target_credential}')}

region = us-east-2

acl = bucket-owner-full-control

[amazon_s3_source]

type = s3

provider = AWS

env_auth = false

access_key_id = ${_credentialUser('${ops_var_source_credential}')}

secret_access_key = ${_credentialPwd('${ops_var_source_credential}')}

region = us-east-2

acl = bucket-owner-full-control

role_arn = arn:aws:iam::552436975963:role/SB-AWS-FULLX

[microsoft_azure_blob_storage_sas_source]

type = azureblob

sas_url = ${_credentialPwd('${ops_var_source_credential}')}

[microsoft_azure_blob_storage_sas_target]

type = azureblob

sas_url = ${_credentialPwd('${ops_var_target_credential}')}

[microsoft_azure_blob_storage_source]

type = azureblob

account = ${_credentialUser('${ops_var_source_credential}')}

key = ${_credentialPwd('${ops_var_source_credential}')}

[microsoft_azure_blob_storage_target]

type = azureblob

account = ${_credentialUser('${ops_var_target_credential}')}

key = ${_credentialPwd('${ops_var_target_credential}')}

[datalakegen2_storage_source]

type = azureblob

account = ${_credentialUser('${ops_var_source_credential}')}

key = ${_credentialPwd('${ops_var_source_credential}')}

[datalakegen2_storage_target]

type = azureblob

account = ${_credentialUser('${ops_var_target_credential}')}

key = ${_credentialPwd('${ops_var_target_credential}')}

[datalakegen2_storage_sp_source]

type = azureblob

account = ${_credentialUser('${ops_var_source_credential}')}

service_principal_file = ${_scriptPath('azure-principal.json')}

# service_principal_file = C:\virtual_machines\Setup\SoftwareKeys\Azure\azure-principal.json

[datalakegen2_storage_sp_target]

type = azureblob

account = ${_credentialUser('${ops_var_target_credential}')}

service_principal_file = ${_scriptPath('azure-principal.json')}

# service_principal_file = C:\virtual_machines\Setup\SoftwareKeys\Azure\azure-principal.json

[google_cloud_storage_source]

type = google cloud storage

service_account_file = ${_credentialPwd('${ops_var_source_credential}')}

object_acl = bucketOwnerFullControl

project_number = clagcs

location = europe-west3

[google_cloud_storage_target]

type = google cloud storage

service_account_file = ${_credentialPwd('${ops_var_target_credential}')}

object_acl = bucketOwnerFullControl

project_number = clagcs

location = europe-west3

[onedrive_source]

type = onedrive

token = ${_credentialToken('${ops_var_source_credential}')}

drive_id = ${_credentialUser('${ops_var_source_credential}')}

drive_type = business

update_credential = token

[onedrive_target]

type = onedrive

token = ${_credentialToken('${ops_var_target_credential}')}

drive_id = ${_credentialUser('${ops_var_target_credential}')}

drive_type = business

update_credential = token

[hdfs_source]

type = hdfs

namenode = 172.18.0.2:8020

username = maria_dev

[hdfs_target]

type = hdfs

namenode = 172.18.0.2:8020

username = maria_dev

[linux_source]

type = local

[linux_target]

type = local

[windows_source]

type = local

[windows_target]

type = local

[sftp_source]

type = sftp

host = 3.19.76.58

user = ubuntu

pass = ${_credentialToken('${ops_var_source_credential}')}

[sftp_target]

type = sftp

host = 3.19.76.58

user = ubuntu

pass = ${_credentialToken('${ops_var_target_credential}')}

[copy_url]

|

connection.conf in the Universal Controller script library
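Because the connection file uses an INI-style layout, its section names (the storage types that tasks refer to) can be listed with Python's standard `configparser`. This is only an illustrative sketch: the `list_remotes` helper and the shortened `SAMPLE` text are assumptions, and the `${...}` credential functions are kept as literal text rather than resolved, since resolving them is the Controller's job.

```python
import configparser

SAMPLE = """\
# Shortened sample; section names follow the connection file above.
[amazon_s3_source]
type = s3
provider = AWS
access_key_id = ${_credentialUser('${ops_var_source_credential}')}

[microsoft_azure_blob_storage_target]
type = azureblob
account = ${_credentialUser('${ops_var_target_credential}')}

[linux_source]
type = local
"""


def list_remotes(config_text: str) -> dict:
    """Return {section name: backend type} for every section in the text."""
    # interpolation=None keeps the ${...} credential functions as literal text.
    parser = configparser.ConfigParser(interpolation=None)
    parser.read_string(config_text)
    return {name: parser[name].get("type", fallback="")
            for name in parser.sections()}


for name, backend in list_remotes(SAMPLE).items():
    print(f"{name}: {backend}")
```

A check like this can help confirm that every storage type selected in a task has a matching section before the file is stored in the script library.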

Considerations

Rclone supports connections to almost any storage system on the market:

Overview of Cloud Storage Systems

However, the current Universal Task has been tested only for the following storage types:

LINUX

AWS S3

Azure Blob Storage

Google GCS

Microsoft OneDrive, including SharePoint

HDFS

HTTPS URL

- SFTP

| Note | ||

|---|---|---|

| ||

If you want to connect to a different system (for example, Dropbox), contact Stonebranch for support. |

Create an Inter-Cloud Data Transfer Task

For Universal Task Inter-Cloud Data Transfer, create a new task and enter the task-specific Details that were created in the Universal Template.

The following Actions are supported:

| Action | Description |
|---|---|
| list directory | List directories; for example, list object stores like S3 buckets or Azure containers, or list OS directories from Linux, Windows, or HDFS. |
| copy | Copy objects from source to target. |
| copyto | Copy a single object from source to target and, optionally, rename the object on the target. |
| move | Move objects from source to target. |
| moveto | Move a single object from source to target and, optionally, rename the object on the target. |
| sync | Sync the source to the destination, changing the destination only. |
| list objects | List objects in an OS directory or cloud object store. |
| remove-object | Remove objects in an OS directory or cloud object store. |
| remove-object-store | Remove an OS directory or cloud object store. |
| create-object-store | Create an OS directory or cloud object store. |
| copy-url | Download a URL's content and copy it to the destination without saving it in temporary storage. |
| monitor-object | Monitor a file or object in an OS directory or cloud object store and, optionally, launch Task(s) when an object is identified by the monitor. |

The following sections describe the fields for each task action and provide an example.

Important Considerations

Before running a move, moveto, copy, copyto or sync command, you can always try the command by setting the Dry-run option in the Task.

The field max-depth (recursion depth) limits the recursion depth. max-depth 1 means that only the current directory is in scope. This is the default value.

| Note | ||

|---|---|---|

| ||

Attention: If you change max-depth to a value greater than 1, a recursive action is performed. This should be considered in any copy, move, sync, remove-object, and remove-object-store operations. |
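The effect of the recursion depth can be illustrated on a local directory tree. The sketch below is not how Rclone implements max-depth; it is a hypothetical `list_files` helper that mimics the described behavior (depth 1 means only the current directory is in scope) using `os.walk`.

```python
import os
import tempfile


def list_files(root: str, max_depth: int = 1) -> list:
    """List files under root, descending at most max_depth directory levels."""
    found = []
    root = os.path.abspath(root)
    for current, dirs, files in os.walk(root):
        # Depth 1 is the root itself; each nested directory adds one level.
        rel = os.path.relpath(current, root)
        depth = 1 if rel == "." else rel.count(os.sep) + 2
        for name in files:
            found.append(os.path.relpath(os.path.join(current, name), root))
        if depth >= max_depth:
            dirs[:] = []  # stop os.walk from descending any further
    return sorted(found)


with tempfile.TemporaryDirectory() as tmp:
    os.makedirs(os.path.join(tmp, "sub"))
    for rel_path in ("report1.txt", os.path.join("sub", "report2.txt")):
        with open(os.path.join(tmp, rel_path), "w") as handle:
            handle.write("demo")
    print(list_files(tmp, max_depth=1))  # only report1.txt is in scope
    print(list_files(tmp, max_depth=2))  # the file inside sub is included as well
```

With a depth greater than 1, files in subdirectories enter the scope of the action, which is why the depth matters for move and remove operations.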

Inter-Cloud Data Transfer Actions

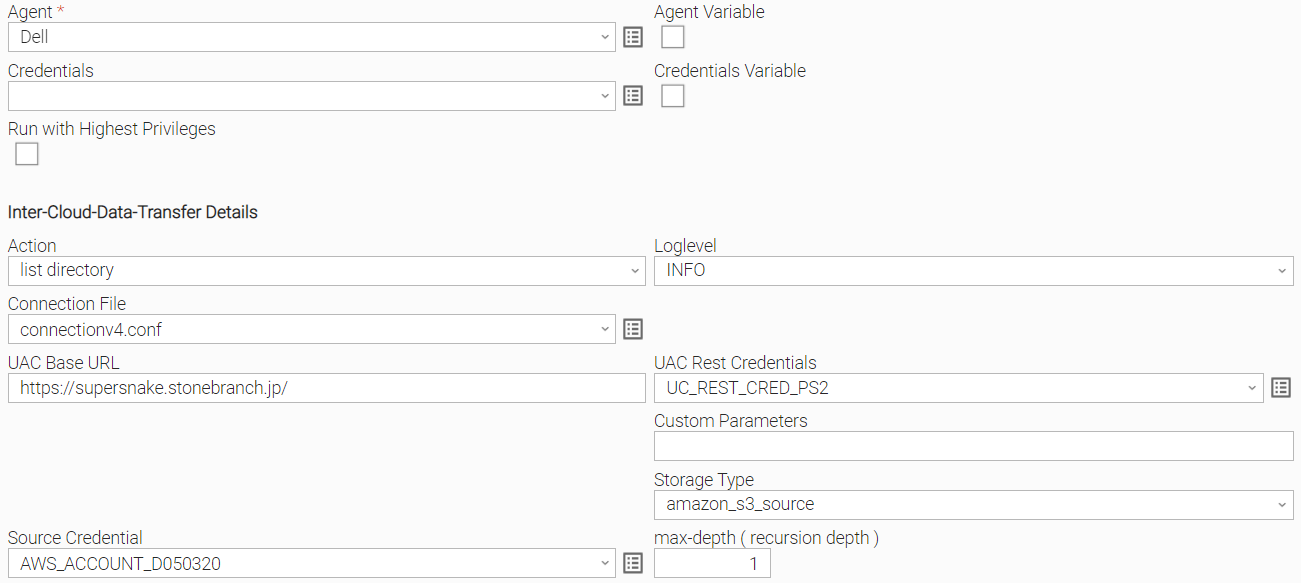

Action: list directory

| Field | Description |

Agent | Linux or Windows Universal Agent to execute the Rclone command line. |

Agent Cluster | Optional Agent Cluster for load balancing. |

Action | [ list directory, copy, list objects, move, remove-object, remove-object-store, create-object-store, copy-url, monitor-object ] list directories; for example,

|

Storage Type | Select the storage type:

|

| Source Credential | Credential used for the selected Storage Type. |

Connection File | In the connection file, you configure all required Parameters and Credentials to connect to the Source and Target Cloud Storage System. For example, if you want to transfer a file from AWS S3 to Azure Blob Storage, you must configure the connection Parameters for AWS S3 and Azure Blob Storage. For details on how to configure the Connection File, refer to section Configure the connection file. |

UAC Rest Credentials | Universal Controller Rest API Credentials. |

UAC Base URL | Universal Controller URL; for example, https://192.168.88.40/uc. |

Loglevel | Universal Task logging settings [DEBUG | INFO| WARNING | ERROR | CRITICAL]. |

| Other Parameters | This field can be used to apply additional flag parameters to the selected action. For a list of all possible flags, refer to Global Flags. Recommendation: Add the flag max-depth 1 to avoid a recursive action. |

max-depth ( recursion depth ) | limits the recursion depth

|

Example

The following example lists all AWS S3 buckets in the AWS account configured in the connection.conf file.

No sub-directories are displayed, because max-depth is set to 1.

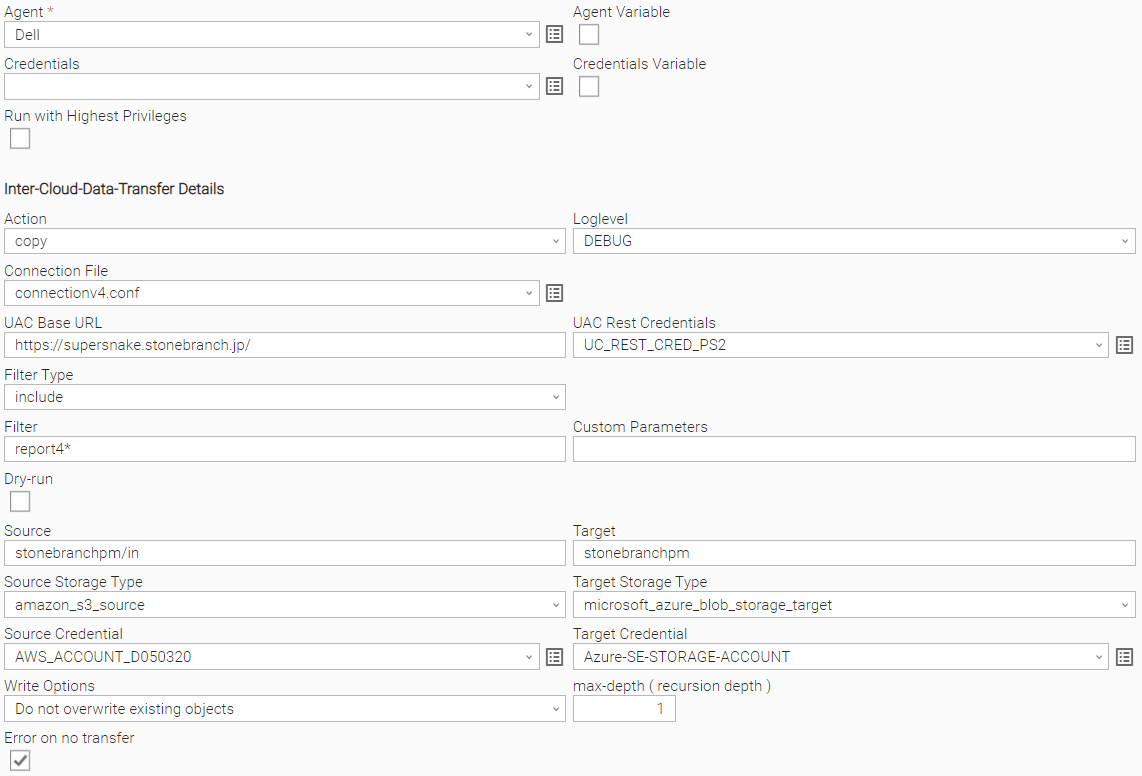

Action: copy

Before running a move, moveto, copy, copyto or sync command, you can always try the command by setting the Dry-run option in the Task.

| Field | Description |

Agent | Linux or Windows Universal Agent to execute the Rclone command line. |

Agent Cluster | Optional Agent Cluster for load balancing. |

Action | [ list directory, copy, copyto, list objects, move, moveto, sync, remove-object, remove-object-store, create-object-store, copy-url, monitor-object ] Copy objects from source to target. |

| Write Options | [ Do not overwrite existing objects, Replace existing objects, Create new with timestamp if exists, Use Custom Parameters ] Do not overwrite existing objects: Objects on the target with the same name will not be overwritten. Replace existing objects: Objects are always overwritten, even if the same object already exists on the target. Create new with timestamp if exists: If an object with the same name exists on the target, the object is duplicated with a timestamp added to the name. The file extension is kept. For example, if report1.txt exists on the target, a new file with a timestamp as postfix is created on the target; for example, report1_20220513_093057.txt. Use Custom Parameters: Only the parameters in the field “Customer Parameters” will be applied (other Parameters will be ignored). |

| error-on-no-transfer | The error-on-no-transfer flag lets the task fail in case no transfer was done. |

Source Storage Type | Select a source storage type:

|

Target Storage Type | Select the target storage type:

|

Connection File | In the connection file, you configure all required Parameters and Credentials to connect to the Source and Target Cloud Storage System. For example, if you want to transfer a file from AWS S3 to Azure Blob Storage, you must configure the connection Parameters for AWS S3 and Azure Blob Storage. For details on how to configure the Connection File, refer to section Configure the connection file. |

Filter Type | [ include, exclude, none ] Define the type of filter to apply. |

Filter | Provide the patterns for file matching; for example, in a copy action: Filter Type: include

For more examples on the filter matching pattern, refer to Rclone Filtering. |

Other Parameters | This field can be used to apply additional flag parameters to the selected action. For a list of all possible flags, refer to Global Flags. |

Dry-run | [ checked , unchecked ] Do a trial run with no permanent changes. |

UAC Rest Credentials | Universal Controller Rest API Credentials |

UAC Base URL | Universal Controller URL For example, https://192.168.88.40/uc |

Loglevel | Universal Task logging settings [DEBUG | INFO| WARNING | ERROR | CRITICAL] |

max-depth ( recursion depth ) | limits the recursion depth

Attention: If you change max-depth to a value greater than 1, a recursive action is performed. |

Example

The following example copies all files starting with report4 from the Amazon S3 bucket stonebranchpm folder in to the Azure container stonebranchpm.

No recursive copy will be done, because the flag --max-depth 1 is set.
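For orientation, the example above roughly corresponds to an Rclone command line like the one assembled below. This is a sketch under assumptions, not the command the Universal Task actually generates: the `build_copy_command` helper is hypothetical, the source path `stonebranchpm/in` assumes that the source folder is named `in`, and the connection file is assumed to be available as `connections.conf` with its credential functions already resolved. The flags `--config`, `--include`, `--max-depth`, and `--dry-run` are standard Rclone flags.

```python
def build_copy_command(source, target, include=None, max_depth=1,
                       dry_run=False, config_file="connections.conf"):
    """Assemble an rclone copy argument list (nothing is executed here)."""
    command = ["rclone", "copy", source, target,
               "--config", config_file,
               "--max-depth", str(max_depth)]
    if include:
        command += ["--include", include]  # include filter pattern
    if dry_run:
        command.append("--dry-run")  # trial run with no permanent changes
    return command


command = build_copy_command(
    "amazon_s3_source:stonebranchpm/in",                  # assumed source path
    "microsoft_azure_blob_storage_target:stonebranchpm",  # target container
    include="report4*",
    dry_run=True,
)
print(" ".join(command))
```

When such a line is pasted into a shell, the filter pattern should be quoted so that the shell does not expand it.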

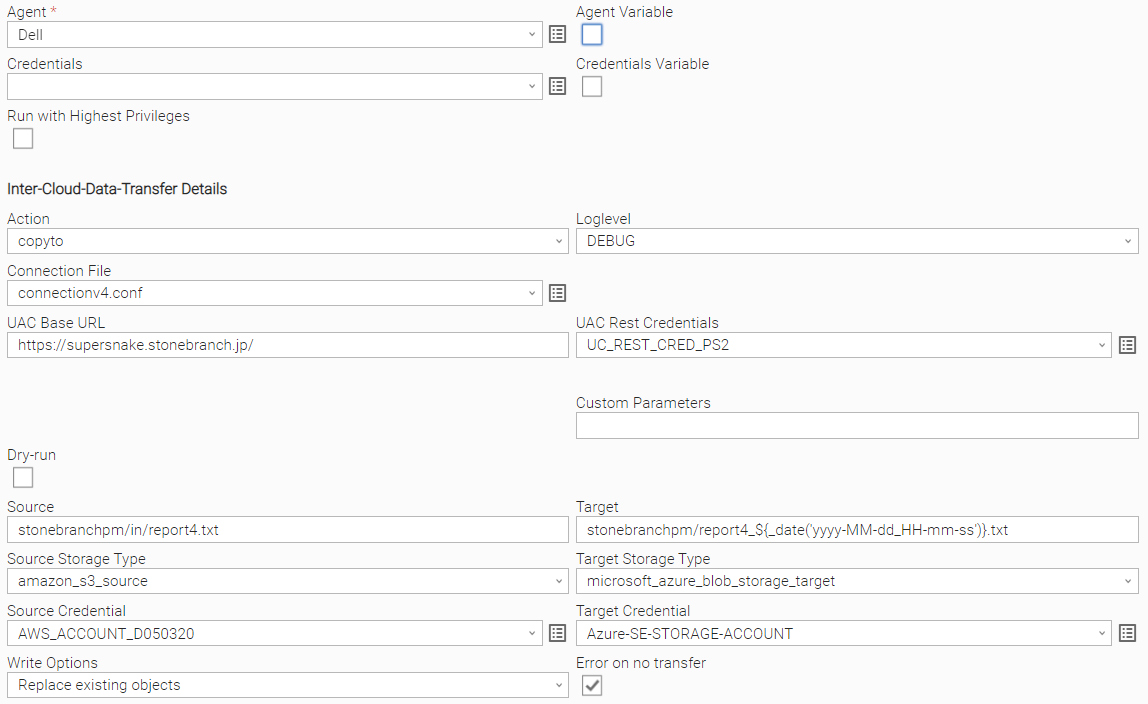

Action: copyto

The Action copyto copies a single object from source to target and allows you to rename the object on the target.

Before running a move, moveto, copy, copyto, or sync command, you can always try the command by setting the Dry-run option in the Task.

Field | Description |

Agent | Linux or Windows Universal Agent to execute the Rclone command line. |

Agent Cluster | Optional Agent Cluster for load balancing |

Action | [ list directory, copy, copyto, list objects, move, moveto, sync, remove-object, remove-object-store, create-object-store, copy-url, monitor-object ] copyto copies an object from source to target and optionally renames the object on the target. |

Write Options | [ Do not overwrite existing objects, Replace existing objects, Create new with timestamp if exists, Use Custom Parameters ] Do not overwrite existing objects: Objects on the target with the same name will not be overwritten. Replace existing objects: Objects are always overwritten, even if the same object already exists on the target. Create new with timestamp if exists: If an object with the same name exists on the target, the object is duplicated with a timestamp added to the name. The file extension is kept. For example, if report1.txt exists on the target, a new file with a timestamp as postfix is created on the target; for example, report1_20220513_093057.txt. Use Custom Parameters: Only the parameters in the field “Customer Parameters” will be applied (other Parameters will be ignored). |

error-on-no-transfer | The error-on-no-transfer flag lets the task fail in case no transfer was done. |

Source Storage Type | Select a source storage type:

|

Target storage Type | Select the target storage type:

|

Connection File | In the connection file, configure all required Parameters and Credentials to connect to the Source and Target Cloud Storage System. For example, if you want to transfer a file from AWS S3 to Azure Blob Storage, you must configure the connection Parameters for AWS S3 and Azure Blob Storage. For details on how to configure the Connection File, refer to section Configure the connection file. |

Other Parameters | This field can be used to apply additional flag parameters to the selected action. For a list of possible flags, refer to https://rclone.org/flags/. |

Dry-run | [ checked , unchecked ] Do a trial run with no permanent changes. |

UAC Rest Credentials | Universal Controller Rest API Credentials |

UAC Base URL | Universal Controller URL For example, https://192.168.88.40/uc |

Loglevel | Universal Task logging settings [DEBUG | INFO| WARNING | ERROR | CRITICAL] |

Example

The following example copies the file report4.txt from the Amazon S3 bucket stonebranchpm folder in to the Azure container stonebranchpm.

The file will be renamed at the target to: stonebranchpm/report4_${_date('yyyy-MM-dd_HH-mm-ss')}.txt; for example report4_2022-05-16_08-00-29.txt

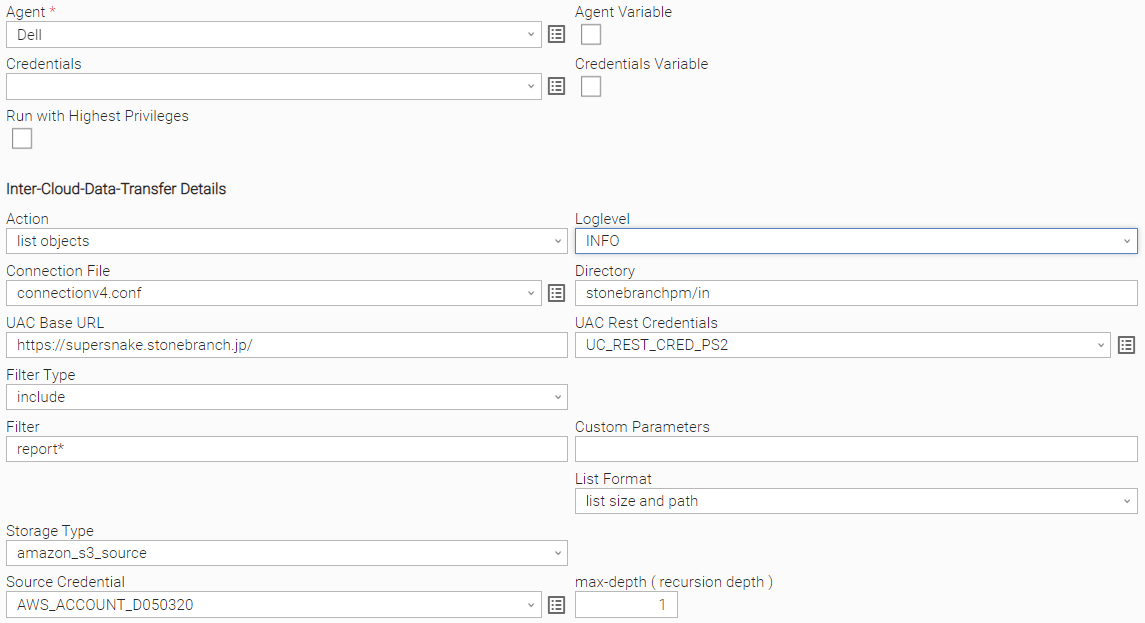
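The rename pattern in the example above is resolved by the Universal Controller. To show what the resulting name looks like, the following sketch reproduces the same format in Python; the `timestamped_target` helper is hypothetical, and `%Y-%m-%d_%H-%M-%S` is the `strftime` counterpart of the pattern yyyy-MM-dd_HH-mm-ss.

```python
from datetime import datetime


def timestamped_target(folder: str, stem: str, extension: str, now: datetime) -> str:
    """Build '<folder>/<stem>_<yyyy-MM-dd_HH-mm-ss><extension>'."""
    # strftime equivalent of the pattern yyyy-MM-dd_HH-mm-ss used in the example.
    stamp = now.strftime("%Y-%m-%d_%H-%M-%S")
    return f"{folder}/{stem}_{stamp}{extension}"


print(timestamped_target("stonebranchpm", "report4", ".txt",
                         datetime(2022, 5, 16, 8, 0, 29)))
# prints: stonebranchpm/report4_2022-05-16_08-00-29.txt
```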

Action: list objects

| Field | Description |

Agent | Linux or Windows Universal Agent to execute the Rclone command line. |

Agent Cluster | Optional Agent Cluster for load balancing. |

Action | [ list directory, copy, copyto, list objects, move, moveto, sync, remove-object, remove-object-store, create-object-store, copy-url, monitor-object ] List objects in an OS directory or cloud object store. |

| Storage Type | Select the storage type:

|

| Source Credential | Credential used for the selected Storage Type. |

Connection File | In the connection file, you configure all required Parameters and Credentials to connect to the Source and Target Cloud Storage System. For example, if you want to transfer a file from AWS S3 to Azure Blob Storage, you must configure the connection Parameters for AWS S3 and Azure Blob Storage. For details on how to configure the Connection File, refer to section Configure the connection file. |

Filter Type | [ include, exclude, none ] Define the type of filter to apply. |

Filter | Provide the patterns for file matching; for example, in a copy action: Filter Type: include

For more examples on the filter matching pattern, refer to Rclone Filtering. |

Other Parameters | This field can be used to apply additional flag parameters to the selected action. For a list of all possible flags, refer to Global Flags. |

| Directory | Name of the directory you want to list the files in. For example, Directory: stonebranchpm/out would mean to list all objects in the bucket stonebranchpm folder out. |

| List Format | [ list size and path, list modification time, size and path, list objects and directories, list objects and directories (Json) ] The Choice box specifies how the output should be formatted. |

UAC Rest Credentials | Universal Controller Rest API Credentials. |

UAC Base URL | Universal Controller URL. For example, https://192.168.88.40/uc |

Loglevel | Universal Task logging settings [DEBUG | INFO| WARNING | ERROR | CRITICAL]. |

max-depth ( recursion depth ) | limits the recursion depth

|

Example

The following example lists all objects starting with report in the S3 bucket stonebranchpm.

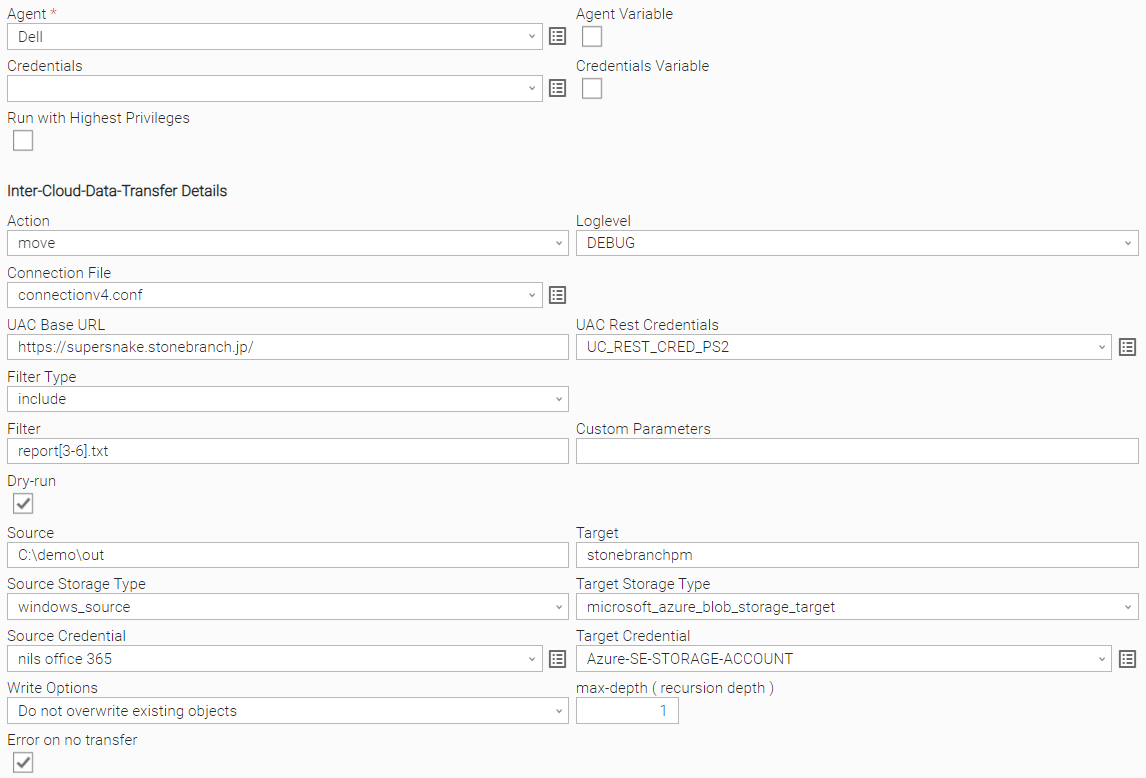

Action: move

| Field | Description |

Agent | Linux or Windows Universal Agent to execute the Rclone command line. |

Agent Cluster | Optional Agent Cluster for load balancing. |

Action | [ list directory, copy, copyto, list objects, move, moveto, remove-object, remove-object-store, create-object-store, copy-url, monitor-object ] move objects from source to target |

| error-on-no-transfer | The error-on-no-transfer flag lets the task fail in case no transfer was done. |

Source Storage Type | Select a source storage type:

|

Target storage Type | Select the target storage type:

|

Connection File | In the connection file you configure all required Parameters and Credentials to connect to the Source and Target Cloud Storage System |

...

. For example, if you want to transfer a file from AWS S3 to Azure Blob Storage, you must configure the connection Parameters for AWS S3 and Azure Blob |

...

The connection file must be saved in the Universal Controller script library; for example, cloud2cloud.conf

Creation of the Connection File

The connection file can be created manually, using the sample connection file cloud2cloud.conf as a template, or interactively with the rclone config tool: rclone config.

If you do not want secret keys and passwords to appear in clear text in the connection file, you can use a Universal Controller credential in the script. For example, to protect the Amazon S3 secret_access_key, set up a Universal Controller credential named AWS_SECRET_ACCESS_KEY_D050320 and reference this credential in the script:

| Code Block | ||

|---|---|---|

| ||

secret_access_key = ${_credentialPwd('AWS_SECRET_ACCESS_KEY_D050320')} |

Considerations

Rclone supports connections to almost any storage system on the market:

Overview of Cloud Storage Systems

However, the current Universal Task has only been tested for the following storage types:

LINUX

AWS S3

Azure Blob Storage

Google GCS

Microsoft OneDrive, including SharePoint

HDFS

HTTPS URL

| Note | ||

|---|---|---|

| ||

If you want to connect to a different system (for example, Dropbox), you should test this before taking it to production. |

Create a New Inter-Cloud Data Transfer Task

For Universal Task Inter-Cloud Data Transfer, create a new task and enter the task-specific Details that were created in the Universal Template.

The following Actions are supported:

...

list directory

...

List directories; for example,

- List object stores like S3 buckets, Azure container.

- List OS directories from Linux, Windows, HDFS.

...

copy

...

Copy objects from source to target.

...

list objects

...

List objects in an OS directory or cloud object store.

...

move

...

Move objects from source to target.

...

remove-object

...

Remove objects in an OS directory or cloud object store.

...

remove-object-store

...

Remove an OS directory or cloud object store.

...

create-object-store

...

Create an OS directory or cloud object store.

...

copy-url

...

Download a URL's content and copy it to the destination without saving it in temporary storage.

...

monitor-object

...

Monitor a file or object in an OS directory or cloud object store.

The following sections describe the fields for each task action and provide an example.

Inter-Cloud Data Transfer Actions

Action: list directory

| Field | Description |

Agent | Linux or Windows Universal Agent to execute the Rclone command line. |

Agent Cluster | Optional Agent Cluster for load balancing. |

Action | [ list directory, copy, list objects, move, remove-object, remove-object-store, create-object-store, copy-url, monitor-object ] list directories; for example,

|

Storage Type | Enter a storage Type name as defined in the Connection File; for example, amazon_s3, microsoft_azure_blob_storage, hdfs, onedrive, linux. For a list of all possible storage types, refer to Overview of Cloud Storage Systems. For details on how to configure the Connection File, refer to section Configure the connection file. |

Filter Type | [ include, exclude, none ] Define the type of filter to apply. |

Filter | Provide the patterns for file matching; for example, in a copy action: Filter Type: include

For more examples on the filter matching pattern, refer to Rclone Filtering. |

Other Parameters | This field can be used to apply additional flag parameters to the selected action. For a list of all possible flags, refer to Global Flags. |

Dry-run | [ checked , unchecked ] Do a trial run with no permanent changes. |

UAC Rest Credentials | Universal Controller Rest API Credentials. |

UAC Base URL | Universal Controller URL. For example, https://192.168.88.40/uc |

Loglevel | Universal Task logging settings [DEBUG | INFO| WARNING | ERROR | CRITICAL]. |

max-depth ( recursion depth ) | limits the recursion depth

Attention: If you change max-depth to a value greater than 1, a recursive action is performed. |

Example

The following example moves the objects matching report[3-6].txt from the Windows source directory C:\demo\out to the target Azure container stonebranchpm.

The Windows source is the server where the Universal Agent is installed.

No recursive move is performed, because max-depth is set to 1.
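To see which names a pattern such as report[3-6].txt selects, the matching can be approximated in Python. This is a sketch only: Python's `fnmatch` behaves like Rclone's glob matching for simple patterns such as this one, but Rclone's filter rules differ in other cases (for example, in how `*` and `**` treat directory separators), so the Rclone Filtering documentation remains the reference. The `select` helper and the file names are made up for illustration.

```python
import fnmatch

PATTERN = "report[3-6].txt"  # pattern from the example above


def select(names, pattern=PATTERN):
    """Return the names that match the pattern (case-sensitive)."""
    return [name for name in names if fnmatch.fnmatchcase(name, pattern)]


names = ["report2.txt", "report3.txt", "report6.txt", "report7.txt", "report3.csv"]
print(select(names))  # ['report3.txt', 'report6.txt']
```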

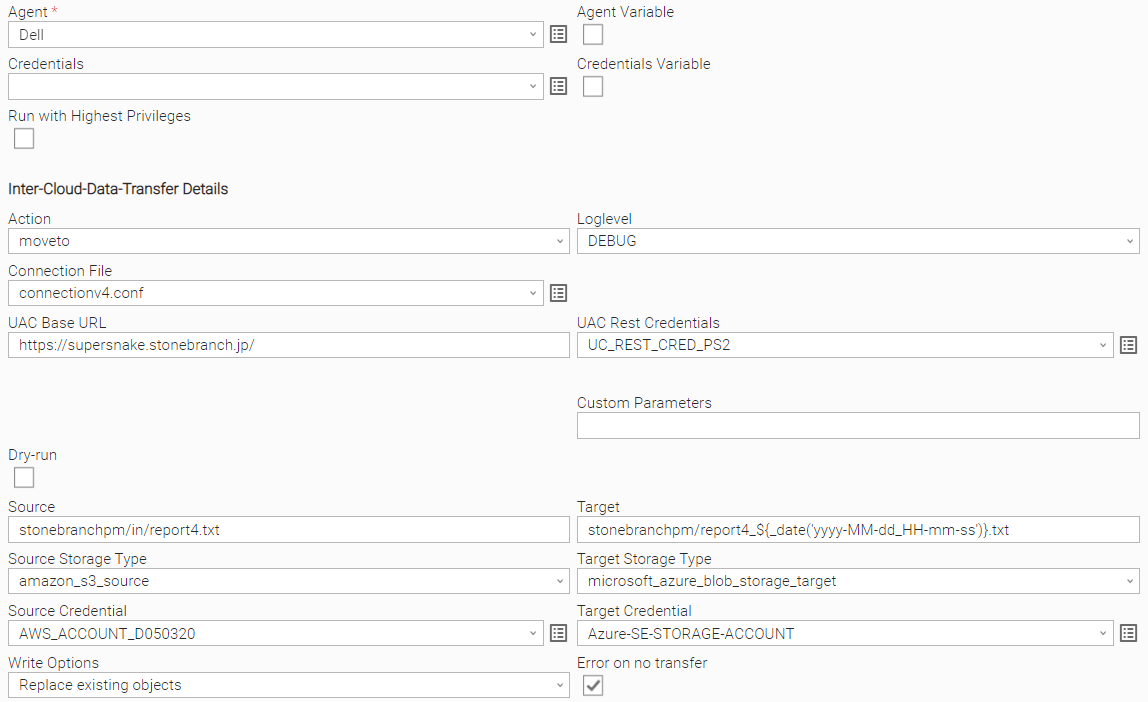

Action: moveto

The Action moveto moves a single object from source to target and allows you to rename the object on the target.

Before running a move, moveto, copy, copyto, or sync command, you can always try the command by setting the Dry-run option in the Task.

Field | Description |

Agent | Linux or Windows Universal Agent to execute the Rclone command line. |

Agent Cluster | Optional Agent Cluster for load balancing. |

Action | [ list directory, copy, copyto, list objects, move, moveto, sync, remove-object, remove-object-store, create-object-store, copy-url, monitor-object ] Move objects from source to target. |

error-on-no-transfer | The error-on-no-transfer flag lets the task fail in case no transfer was done. |

Source Storage Type | Select a source storage type:

|

Target storage Type | Select the target storage type:

|

Connection File | In the connection file, you configure all required Parameters and Credentials to connect to the Source and Target Cloud Storage System. For example, if you want to transfer a file from AWS S3 to Azure Blob Storage, you must configure the connection Parameters for AWS S3 and Azure Blob Storage. For details on how to configure the Connection File, refer to section Configure the connection file. |

Other Parameters | This field can be used to apply additional flag parameters to the selected action. For a list of all possible flags refer to: https://rclone.org/flags/ |

Dry-run | [ checked , unchecked ] Do a trial run with no permanent changes |

UAC Rest Credentials | Universal Controller Rest API Credentials |

UAC Base URL | Universal Controller URL. For example, https://192.168.88.40/uc |

Loglevel | Universal Task logging settings [DEBUG | INFO| WARNING | ERROR | CRITICAL] |

Example

The following example moves the file report4.txt from the Amazon S3 bucket stonebranchpm, folder in, to the Azure container stonebranchpm.

The file will be renamed at the target to: stonebranchpm/report4_${_date('yyyy-MM-dd_HH-mm-ss')}.txt; for example, report4_2022-05-16_08-00-29.txt

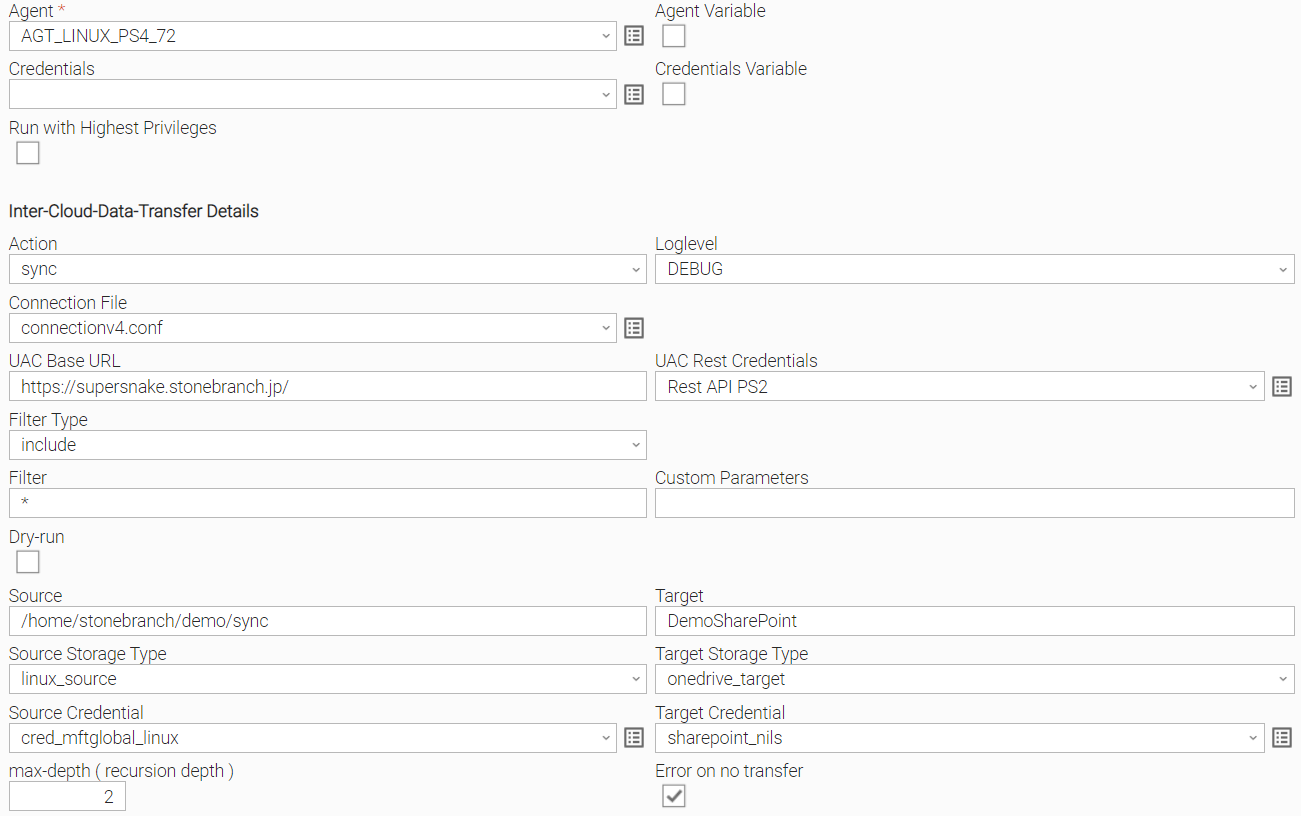
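The timestamp in the target name is produced by the Controller function ${_date('yyyy-MM-dd_HH-mm-ss')} when the task is launched. As a rough illustration of the resulting name, the sketch below builds the same string in Python; the helper name timestamped_target is hypothetical, and the strftime format is assumed to be the equivalent of the Controller date pattern.

```python
from datetime import datetime


def timestamped_target(prefix, extension, when):
    """Build a target object name with a timestamp between prefix and extension."""
    # Assumed equivalent of the pattern 'yyyy-MM-dd_HH-mm-ss'
    return f"{prefix}_{when.strftime('%Y-%m-%d_%H-%M-%S')}{extension}"


# Launch time taken from the example in the text above
target = "stonebranchpm/" + timestamped_target("report4", ".txt", datetime(2022, 5, 16, 8, 0, 29))
print(target)  # stonebranchpm/report4_2022-05-16_08-00-29.txt
```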

Action: sync

Before running a move, moveto, copy, copyto, or sync command, you can always try the command by setting the Dry-run option in the Task.

Field | Description |

Agent | Linux or Windows Universal Agent to execute the Rclone command line. |

Agent Cluster | Optional Agent Cluster for load balancing. |

Action | [ list directory, copy, copyto, list objects, move, moveto, sync, remove-object, remove-object-store, create-object-store, copy-url, monitor-object ] Sync the source to the destination, changing the destination only. |

Write Options | [ Do not overwrite existing objects, Replace existing objects, Create new with timestamp if exists, Use Custom Parameters ]

Do not overwrite existing objects: Objects on the target with the same name will not be overwritten.

Replace existing objects: Objects are always overwritten, even if the same object already exists on the target.

Create new with timestamp if exists: If an object with the same name exists on the target, the object will be duplicated with a timestamp added to the name. The file extension will be kept. For example, if report1.txt exists on the target, a new file with a timestamp as postfix will be created on the target; for example, report1_20220513_093057.txt.

Use Custom Parameters: Only the parameters in the field “Custom Parameters” will be applied (other parameters will be ignored). |

error-on-no-transfer | The error-on-no-transfer flag causes the task to fail if no transfer was done. |

Source Storage Type | Select a source storage type:

|

Target storage Type | Select the target storage type:

|

Connection File | In the connection file, you configure all required Parameters and Credentials to connect to the Source and Target Cloud Storage System. For example, if you want to transfer a file from AWS S3 to Azure Blob Storage, you must configure the connection Parameters for AWS S3 and Azure Blob Storage. For details on how to configure the Connection File, refer to section Configure the Connection File. |

Filter Type | [ include, exclude, none ] Define the type of filter to apply. |

Filter | Provide the patterns for file matching; for example, in a sync action: Filter Type: include, Filter: report[1-3].txt. This means all reports with names matching report1.txt, report2.txt, and report3.txt will be synced. For more examples on the filter matching pattern, refer to Rclone Filtering. |

Other Parameters | This field can be used to apply additional flag parameters to the selected action. For a list of all possible flags, refer to Global Flags. Example: To skip files that are newer on the destination during a move or copy action, you could add the flag --update. |

Dry-run | [ checked , unchecked ] Do a trial run with no permanent changes. |

UAC Rest Credentials | Universal Controller Rest API Credentials. |

UAC Base URL | Universal Controller URL. For example, https://192.168.88.40/uc. |

Loglevel | Universal Task logging settings [DEBUG | INFO| WARNING | ERROR | CRITICAL] |

max-depth ( recursion depth ) | Limits the recursion depth

Attention: If you change max-depth to a value greater than 1, a recursive action is performed. |

Example

The following example synchronizes all files and folders from the Linux source /home/stonebranch/demo/sync to the target SharePoint folder DemoSharePoint.

One level of subfolders and files in the directory /home/stonebranch/demo/sync will be considered for the sync action, because max-depth is set to 2. max-depth 2 means that the current folder plus one subfolder level is synced from the source to the target.

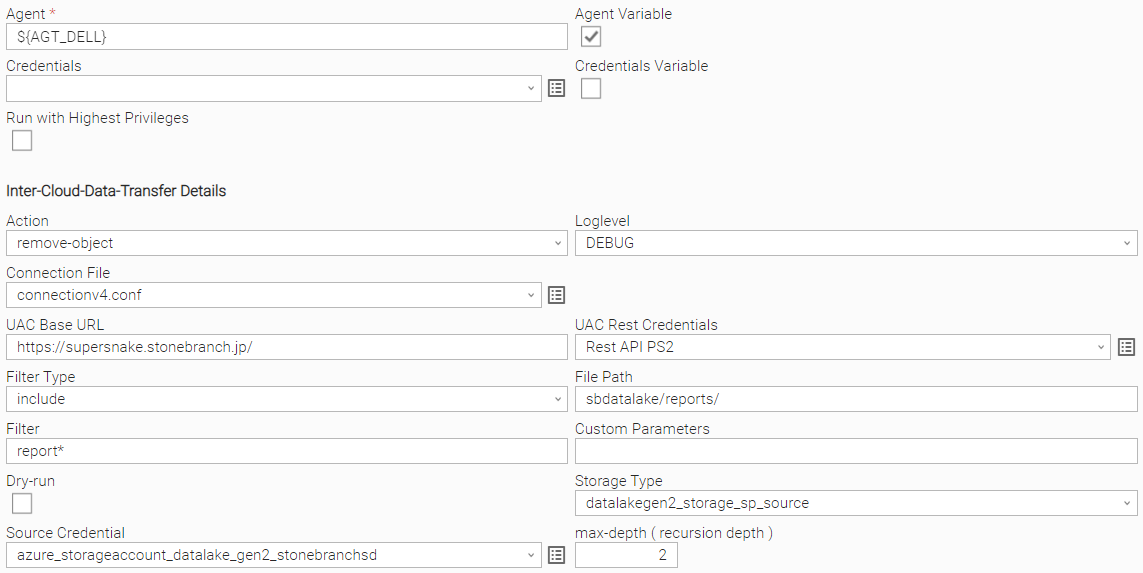
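The Write Options of the sync action decide what happens when an object with the same name already exists on the target. The sketch below models the three documented outcomes as a small Python function. It is only a model of the behaviour described in the field table, not the task's actual implementation; the function name resolve_target_name is hypothetical, and the Use Custom Parameters option is deliberately not modelled.

```python
import os
from datetime import datetime


def resolve_target_name(name, existing, option, now):
    """Return the name to write on the target, or None if the object is skipped."""
    if name not in existing or option == "Replace existing objects":
        return name  # no conflict, or overwriting was requested
    if option == "Do not overwrite existing objects":
        return None  # keep the object that is already on the target
    if option == "Create new with timestamp if exists":
        stem, ext = os.path.splitext(name)  # the file extension is kept
        return f"{stem}_{now.strftime('%Y%m%d_%H%M%S')}{ext}"
    raise ValueError(f"option not modelled in this sketch: {option}")


existing = {"report1.txt"}
now = datetime(2022, 5, 13, 9, 30, 57)
print(resolve_target_name("report1.txt", existing, "Create new with timestamp if exists", now))
# report1_20220513_093057.txt
```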

Action: remove-object

| Field | Description |

Agent | Linux or Windows Universal Agent to execute the Rclone command line. |

Agent Cluster | Optional Agent Cluster for load balancing. |

Action | [ list directory, copy, copyto, list objects, move, moveto, sync, remove-object, remove-object-store, create-object-store, copy-url, monitor-object ] Remove objects in an OS directory or cloud object store. |

Storage Type | Select the storage type:

|

| File Path | Path to the directory in which you want to remove the objects. For example: File Path: stonebranchpmtest, Filter: report[1-3].txt. This removes all S3 objects matching the filter report[1-3].txt (report1.txt, report2.txt, and report3.txt) from the S3 bucket stonebranchpmtest. |

Filter Type | [ include, exclude, none ] Define the type of filter to apply. |

Filter | Provide the patterns for file matching; for example: Filter Type: include, Filter: report[1-3].txt. This matches all reports named report1.txt, report2.txt, and report3.txt. For more examples on the filter matching pattern, refer to https://rclone.org/filtering/ . |

Connection File | In the connection file, you configure all required Parameters and Credentials to connect to the Source and Target Cloud Storage System. For example, if you want to transfer a file from AWS S3 to Azure Blob Storage, you must configure the connection Parameters for AWS S3 and Azure Blob Storage. For details on how to configure the Connection File, refer to section Configure the Connection File. |

Other Parameters | This field can be used to apply additional flag parameters to the selected action. For a list of all possible flags, refer to Global Flags. Recommendation: Add the flag max-depth 1 to all Copy, Move, remove-object and remove-object-store in the task field Other Parameters to avoid a recursive action. Attention: If the flag max-depth 1 is not set, a recursive action is performed. |

Dry-run | [ checked , unchecked ] Do a trial run with no permanent changes. |

UAC Rest Credentials | Universal Controller Rest API Credentials. |

UAC Base URL | Universal Controller URL. For example, https://192.168.88.40/uc |

Loglevel | Universal Task logging settings [DEBUG | INFO| WARNING | ERROR | CRITICAL]. |

max-depth ( recursion depth ) | Limits the recursion depth

Attention: If you change max-depth to a value greater than 1, a recursive action is performed. |

Example

The following example removes all objects starting with report from the Azure container sbdatalake, folder reports.

If the folder reports contained a subfolder with an object starting with report, that object would also be deleted, because max-depth is set to 2. max-depth 2 means that the current folder plus one subfolder level is in scope for the remove-object action.

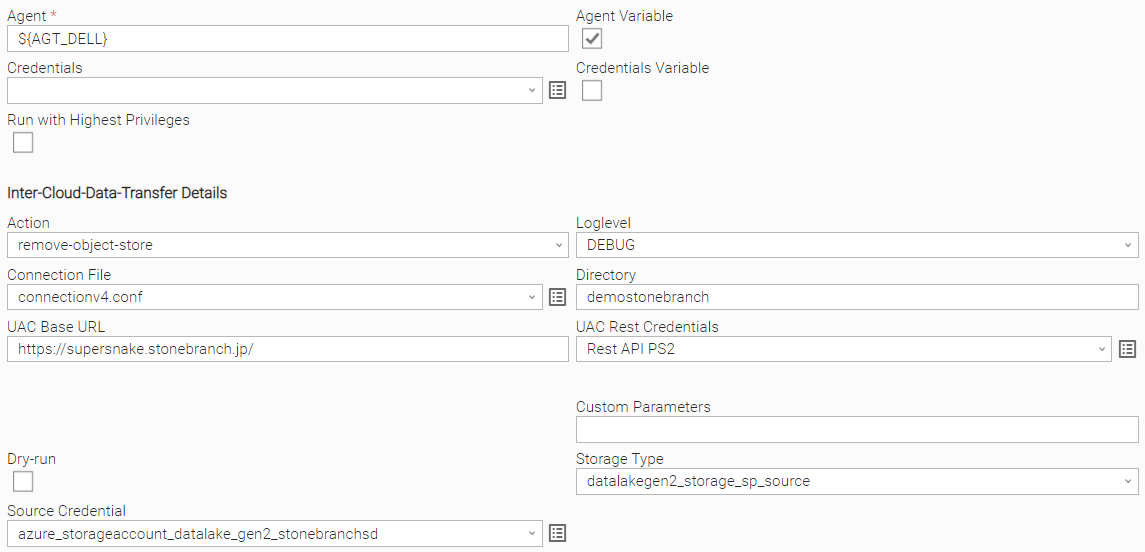
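The effect of max-depth can be reasoned about by counting path levels relative to the starting folder. The following sketch assumes that a file directly in the starting folder counts as depth 1 and that each subfolder level adds 1, which matches the description above; the function name and the sample paths are hypothetical.

```python
def within_max_depth(relative_path, max_depth):
    """Return True if an object at relative_path is in scope for the given max-depth."""
    # A file directly in the starting folder has depth 1, one subfolder down has depth 2, ...
    depth = len([part for part in relative_path.split("/") if part])
    return depth <= max_depth


# Paths relative to the folder reports (illustrative names)
print(within_max_depth("report1.txt", 1))               # True: directly in the folder
print(within_max_depth("archive/report2.txt", 1))       # False: one subfolder level down
print(within_max_depth("archive/report2.txt", 2))       # True: covered by max-depth 2
print(within_max_depth("archive/2022/report3.txt", 2))  # False: two subfolder levels down
```

Running a remove action with Dry-run checked first is a safe way to confirm which objects are actually in scope.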

Action: remove-object-store

| Field | Description |

Agent | Linux or Windows Universal Agent to execute the Rclone command line. |

Agent Cluster | Optional Agent Cluster for load balancing. |

Action | [ list directory, copy, copyto, list objects, move, moveto, sync, remove-object, remove-object-store, create-object-store, copy-url, monitor-object ] Remove an OS directory or cloud object store. |

Storage Type | Select the storage type:

|

| Source Credential | Credential used for the selected Storage Type. |

| Directory | Name of the directory you want to remove. The directory can be an object store or a file system OS directory. The directory needs to be empty before it can be removed. For example, Directory: stonebranchpmtest would remove the bucket stonebranchpmtest. |

Connection File | In the connection file, you configure all required Parameters and Credentials to connect to the Source and Target Cloud Storage System. For example, if you want to transfer a file from AWS S3 to Azure Blob Storage, you must configure the connection Parameters for AWS S3 and Azure Blob Storage. For details on how to configure the Connection File, refer to section Configure the Connection File. |

Other Parameters | This field can be used to apply additional flag parameters to the selected action. For a list of all possible flags, refer to Global Flags. |

Dry-run | [ checked , unchecked ] Do a trial run with no permanent changes. |

UAC Rest Credentials | Universal Controller Rest API Credentials. |

UAC Base URL | Universal Controller URL. For example, https://192.168.88.40/uc |

Loglevel | Universal Task logging settings [DEBUG | INFO| WARNING | ERROR | CRITICAL] |

Example

The following example removes the Azure container demostonebranch.

The container must be empty in order to remove it.

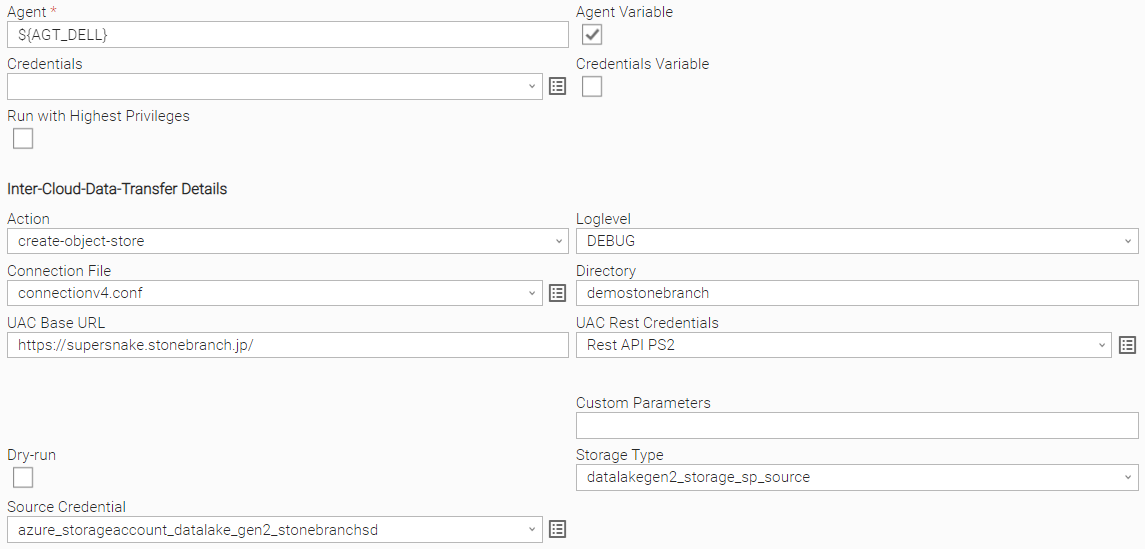

Action: create-object-store

| Field | Description |

Agent | Linux or Windows Universal Agent to execute the Rclone command line. |

Agent Cluster | Optional Agent Cluster for load balancing. |

Action | [ list directory, copy, copyto, list objects, move, moveto, sync, remove-object, remove-object-store, create-object-store, copy-url, monitor-object ] Create an OS directory or cloud object store. |

Storage Type | Select the storage type:

|

| Source Credential | Credential used for the selected Storage Type. |

Connection File | In the connection file, you configure all required Parameters and Credentials to connect to the Source and Target Cloud Storage System. For example, if you want to transfer a file from AWS S3 to Azure Blob Storage, you must configure the connection Parameters for AWS S3 and Azure Blob Storage. For details on how to configure the Connection File, refer to section Configure the Connection File. |

| Directory | Name of the directory you want to create. The directory can be an object store or a file system OS directory. For example, Directory: stonebranchpmtest would create the bucket stonebranchpmtest. |

Other Parameters | This field can be used to apply additional flag parameters to the selected action. For a list of all possible flags, refer to Global Flags. |

Dry-run | [ checked , unchecked ] Do a trial run with no permanent changes. |

UAC Rest Credentials | Universal Controller Rest API Credentials. |

UAC Base URL | Universal Controller URL. For example, https://192.168.88.40/uc |

Loglevel | Universal Task logging settings [DEBUG | INFO| WARNING | ERROR | CRITICAL]. |

Example

The following example creates the Azure container demostonebranch.

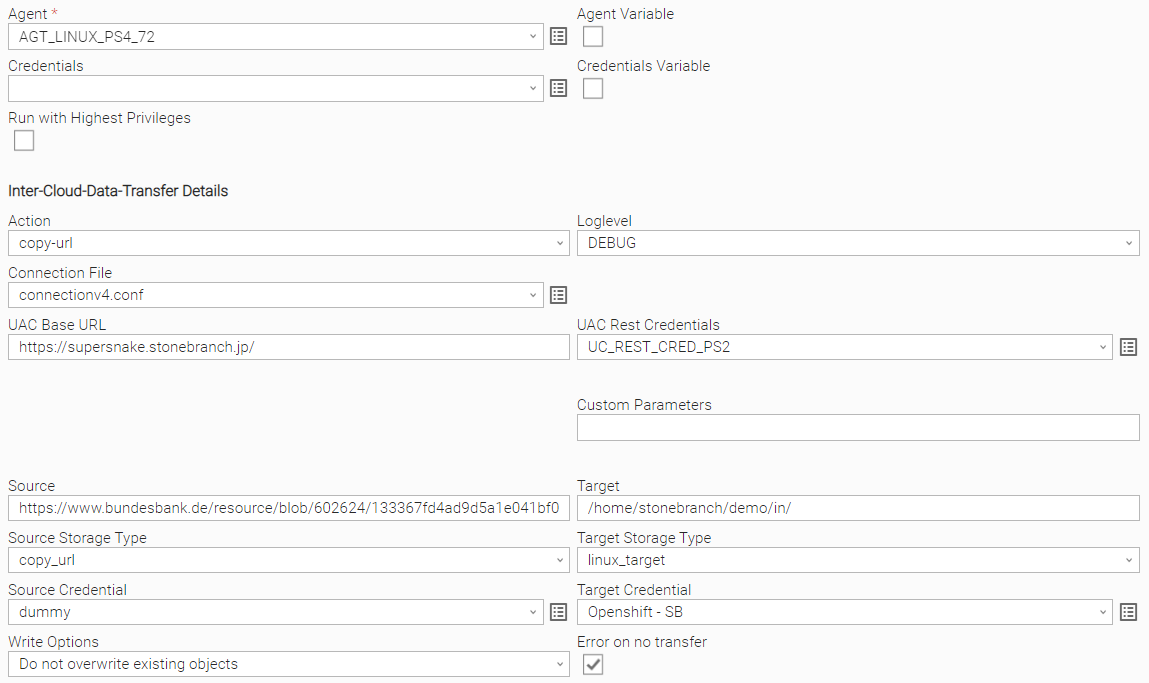

Action: copy-url

| Field | Description |

Agent | Linux or Windows Universal Agent to execute the Rclone command line. |

Agent Cluster | Optional Agent Cluster for load balancing. |

Action | [ list directory, copy, copyto, list objects, move, moveto, sync, remove-object, remove-object-store, create-object-store, copy-url, monitor-object ] Download a URL's content and copy it to the destination without saving it in temporary storage. |

Source | URL parameter. |

| Storage Type | For the action copy_url, the value must be copy_url. |

Source Credential | Credential used for the selected Storage Type |

Target | Enter a target storage type name as defined in the Connection File. For example, amazon_s3, microsoft_azure_blob_storage, hdfs, onedrive, linux .. For a list of all possible storage types, refer to Overview of Cloud Storage Systems. |

Connection File | In the connection file, you configure all required Parameters and Credentials to connect to the Source and Target Cloud Storage System. For example, if you want to transfer a file from AWS S3 to Azure Blob Storage, you must configure the connection Parameters for AWS S3 and Azure Blob Storage. For details on how to configure the Connection File, refer to section Configure the Connection File. |

Other Parameters | This field can be used to apply additional flag parameters to the selected action. For a list of all possible flags, refer to Global Flags. Useful parameters for the copy-url command:

| |||||||

Dry-run | [ checked , unchecked ] Do a trial run with no permanent changes. |

UAC Rest Credentials | Universal Controller Rest API Credentials. |

UAC Base URL | Universal Controller URL. For example, https://192.168.88.40/uc |

Loglevel | Universal Task logging settings [DEBUG | INFO| WARNING | ERROR | CRITICAL] |

Example

The following example downloads a PDF file:

- From the web address: https://www.bundesbank.de/resource/../blz-loeschungen-aktuell-data.pdf

- To the Linux folder: /home/stonebranch/demo/in

The Linux folder is located on the server where the Agent ${AGT_LINUX} runs.

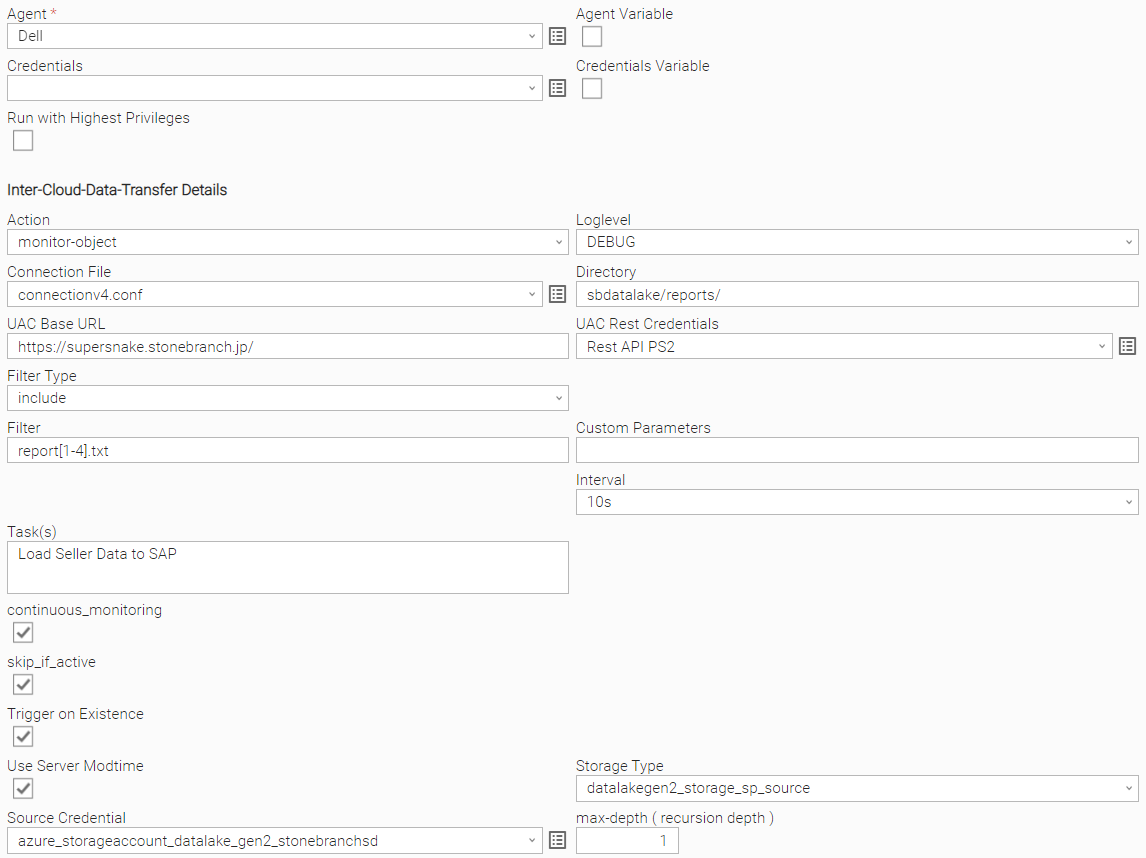
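For orientation, a download like this can also be expressed directly with Rclone's copyurl command. The sketch below only assembles such a command line in Python and prints it. That the task runs exactly this command is an assumption, and the URL and the connection file path are hypothetical placeholders. The flags --auto-filename, --config, and --dry-run are standard Rclone flags.

```python
import shlex


def build_copyurl_command(url, target, config_file, dry_run=False):
    """Assemble an rclone copyurl command line as a list of arguments."""
    # --auto-filename takes the file name from the URL, so target can be a folder
    command = ["rclone", "copyurl", url, target, "--auto-filename", "--config", config_file]
    if dry_run:
        command.append("--dry-run")  # trial run with no permanent changes
    return command


# Hypothetical URL and connection file path, used only for illustration
command = build_copyurl_command(
    "https://example.com/files/report.pdf",
    "/home/stonebranch/demo/in",
    "/home/stonebranch/cloud2cloud.conf",
    dry_run=True,
)
print(shlex.join(command))
```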

Action: monitor-object

| Field | Description |

Agent | Linux or Windows Universal Agent to execute the Rclone command line. |

Agent Cluster | Optional Agent Cluster for load balancing. |

Action | [ list directory, copy, list objects, move, remove-object, remove-object-store, create-object-store, copy-url, monitor-object ] Monitor a file or object in an OS directory or cloud object store. |

Storage Type | Select the storage type:

|

| Source Credential | Credential used for the selected Storage Type. |

| Directory | Name of the directory to scan for the files to monitor. The directory can be an object store or a file system OS directory. For example: Directory: stonebranchpm/out. This would monitor the S3 bucket folder stonebranchpm/out for the object report1.txt. |

Connection File | In the connection file, you configure all required Parameters and Credentials to connect to the Source and Target Cloud Storage System. For example, if you want to transfer a file from AWS S3 to Azure Blob Storage, you must configure the connection Parameters for AWS S3 and Azure Blob Storage. For details on how to configure the Connection File, refer to section Configure the Connection File. |

Filter Type | [ include, exclude, none ] Define the type of filter to apply. |

Filter | Provide the patterns for file matching; for example: Filter Type: include, Filter: report[1-3].txt. This matches all reports named report1.txt, report2.txt, and report3.txt. For more examples on the filter matching pattern, refer to Rclone Filtering. |

Other Parameters | This field can be used to apply additional flag parameters to the selected action. For a list of all possible flags, refer to Global Flags. Example: To skip files that are newer on the destination during a move or copy action, you could add the flag --update. |

| continuous monitoring | [ checked , unchecked ] After the monitor has identified a matching file, it continues to monitor for new files. |

| skip_if_active | [ checked , unchecked ] If the monitor has launched a Task(s), it will only launch a new Task(s) if the launched Task(s) are not active anymore. |

Trigger on Existence | [ checked , unchecked ] If checked, the monitor goes to success even if the file already exists when it was started. |

| Use Server Modtime | Use Server Modtime tells the Monitor to use the upload time to the AWS bucket instead of the last modification time of the file on the source (for example, Linux). |

| Tasks | Comma-separated list of Tasks, which will be launched when the monitoring criteria are met. If the field is empty, no Task(s) will be launched. In this case, the Task acts as a pure monitor. For example, Task1,Task2,Task3 |

| Interval | [ 10s, 60s, 180s ] Monitor interval to check for the file(s) in the configured directory. For example, Interval: 60s would mean that every 60s, the task checks if the file exists in the scan directory. |

UAC Rest Credentials | Universal Controller Rest API Credentials. |

UAC Base URL | Universal Controller URL. For example, https://192.168.88.40/uc |

Loglevel | Universal Task logging settings [DEBUG | INFO | WARNING | ERROR | CRITICAL]. |

max-depth ( recursion depth ) | Limits the recursion depth

Attention: If you change max-depth to a value greater than 1, a recursive action is performed. |

Example

The following example monitors the Azure Datalake Gen2 storage container sbdatalake, folder /reports/, for all objects matching report[1-4].txt (report1.txt, report2.txt, report3.txt, report4.txt).

The monitor will launch the configured Task, Load Seller Data to SAP, if any of the matching objects already exists when the monitor is started (Trigger on Existence is enabled).

After the monitor has identified a matching file, it continues to monitor for new files.

If the monitor has launched the Task Load Seller Data to SAP, it will only launch a new Task Load Seller Data to SAP if the launched Task is no longer active.
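The interplay of Trigger on Existence and skip_if_active can be summarized as two small decisions that are evaluated in every polling interval. The sketch below models them in Python based only on the field descriptions above. The function names are hypothetical, and the task's internal logic may differ.

```python
def objects_to_trigger(current, seen_at_start, already_triggered, trigger_on_existence):
    """Return the matching objects that should trigger in this polling cycle."""
    candidates = set(current) - set(already_triggered)
    if not trigger_on_existence:
        # Objects already present when the monitor started do not count
        candidates -= set(seen_at_start)
    return sorted(candidates)


def should_launch(triggers, tasks_active, skip_if_active):
    """Launch only if something triggered and, with skip_if_active, no launched Task is still running."""
    return bool(triggers) and not (skip_if_active and tasks_active)


# report1.txt already exists when the monitor starts
print(objects_to_trigger(["report1.txt"], ["report1.txt"], [], trigger_on_existence=True))
print(objects_to_trigger(["report1.txt"], ["report1.txt"], [], trigger_on_existence=False))
print(should_launch(["report2.txt"], tasks_active=True, skip_if_active=True))
```

With continuous monitoring checked, these decisions would be repeated at every interval, with already_triggered growing as objects are processed.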